How well can we predict life trajectories?

The explosion of computing power, the ever-increasing availability of data and the large inflow of people into data science and machine learning have led to successes in many domains. In the social sciences, we increasingly see policy makers using predictive models, for example in criminal justice or child protective services.


dr. Louis Raes

Assistant Professor

TiSEM: Tilburg School of Economics and Management


TiSEM: Department of Economics, L.B.D.Raes@tilburguniversity.edu, Room K 356

With enough data, computing power and skilled data scientists, one might think that predicting life trajectories in a well-defined context, for example children's grade point average (GPA), should not be too difficult.

A recent study in the Proceedings of the National Academy of Sciences (PNAS), written by a large international team of researchers, reaches a sobering conclusion. More than a hundred teams of researchers, with backgrounds in fields such as sociology, economics, engineering, computer science and physics, competed to make the best predictions of outcomes in a new wave of the Fragile Families and Child Wellbeing Study. Even the best predictions were at most slightly better than those of a simple benchmark model.

The study design

A small group of researchers affiliated with Princeton University set up a research design popular in machine learning: the common task method. They took advantage of the fact that data from the latest wave of a high-quality longitudinal study, the Fragile Families and Child Wellbeing Study, had already been collected but were not yet publicly available.

They then recruited a large and diverse group of researchers to predict the same outcomes, whose values were not known to the participants, using the same data. Participants were free to use any approach they saw fit. Some used sophisticated machine learning algorithms; others drew on findings from the large existing literature to build prediction models. Teams uploaded their predictions, and every submission was evaluated with exactly the same error metric, which assesses only how well it predicts held-out data: data kept by the organisers and not available to participants.
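To make the common task method more concrete, here is a minimal Python sketch of the organiser's side. It is not the challenge's actual code: it assumes mean squared error as the shared metric and uses toy outcomes, and the names `HOLDOUT_TRUTH`, `mean_squared_error` and `leaderboard` are hypothetical. The key point is that the true outcomes stay with the organiser and every submission passes through the same scoring function.

```python
from statistics import fmean

# Hypothetical held-out outcomes, known only to the organiser (toy values,
# not study data). Participants never see this dictionary.
HOLDOUT_TRUTH = {"id1": 3.0, "id2": 2.5, "id3": 3.5}


def mean_squared_error(truth: dict[str, float], preds: dict[str, float]) -> float:
    """Score one submission against the held-out truth (MSE is an assumed metric)."""
    missing = truth.keys() - preds.keys()
    if missing:
        raise ValueError(f"submission is missing predictions for {sorted(missing)}")
    return fmean((truth[k] - preds[k]) ** 2 for k in truth)


def leaderboard(submissions: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Apply the same metric to every team and rank from best (lowest) to worst."""
    scores = {team: mean_squared_error(HOLDOUT_TRUTH, p) for team, p in submissions.items()}
    return sorted(scores.items(), key=lambda item: item[1])
```

Scoring everyone on one shared metric is what makes submissions built with very different methods directly comparable.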

The results

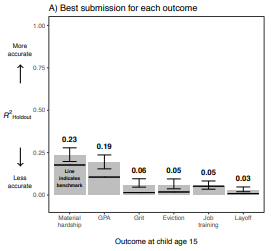

The results were sobering. As Figure 1 shows, even the best predictions were not very accurate. The figure reports the holdout R-squared. This measure equals zero when predictions are exactly as accurate as simply predicting the mean of the training data for everyone, equals one when predictions are perfectly accurate, and becomes negative when predictions are worse than that training-mean baseline.
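A measure with exactly these properties can be written as R²_holdout = 1 − Σᵢ(yᵢ − ŷᵢ)² / Σᵢ(yᵢ − ȳ_train)², summed over the held-out cases, where ŷᵢ is a team's prediction and ȳ_train is the mean outcome in the training data. The Python sketch below assumes that form; the function name and the toy numbers are illustrative only.

```python
from statistics import fmean
from typing import Sequence


def holdout_r_squared(
    y_true: Sequence[float], y_pred: Sequence[float], y_train: Sequence[float]
) -> float:
    """1 minus (squared error of the predictions / squared error of the training mean)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    # Naive benchmark: predict the training-data mean for every held-out case.
    train_mean = fmean(y_train)
    sse_model = sum((y - p) ** 2 for y, p in zip(y_true, y_pred))
    sse_baseline = sum((y - train_mean) ** 2 for y in y_true)
    return 1 - sse_model / sse_baseline
```

For example, with training outcomes [2, 3, 4] (mean 3) and held-out outcomes [2, 4], predicting [3, 3] gives 0, predicting [2, 4] gives 1, and predicting [4, 2] gives −3.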

Looking at all the submitted predictions, two further observations stand out. First, teams used very different approaches, both in how they processed the data and in which statistical learning techniques they applied. Despite these differences, their predictions tended to be quite similar: the distance between the most divergent predictions was smaller than the distance between the best prediction for each outcome and the truth. Second, for each outcome, some cases were predicted well by all teams, whereas others were predicted poorly by all teams.
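The first observation compares two distances. The sketch below assumes Euclidean distance between vectors of predictions over the same held-out cases and uses made-up numbers for three hypothetical teams; it only illustrates what the comparison means and does not reproduce the study's data.

```python
from itertools import combinations
from math import dist

# Hypothetical toy data: true outcomes for four held-out cases and three
# teams' predictions for the same cases (not values from the study).
truth = [1.0, 4.0, 2.0, 5.0]
predictions = {
    "team_a": [2.0, 3.0, 2.5, 3.5],
    "team_b": [2.5, 3.0, 2.0, 3.0],
    "team_c": [2.0, 2.5, 2.5, 3.0],
}


def max_pairwise_distance(preds: dict[str, list[float]]) -> float:
    """Distance between the two most divergent submissions (Euclidean, assumed)."""
    return max(dist(a, b) for a, b in combinations(preds.values(), 2))


def best_distance_to_truth(preds: dict[str, list[float]], truth: list[float]) -> float:
    """Distance from the submission closest to the truth, i.e. the best one."""
    return min(dist(p, truth) for p in preds.values())


print(f"most divergent pair: {max_pairwise_distance(predictions):.2f}")
print(f"best team to truth:  {best_distance_to_truth(predictions, truth):.2f}")
```

In this toy case the most divergent pair of teams is about 0.87 apart, while even the closest team is about 2.12 from the truth: the same pattern the study reports, in miniature.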

Figure 1: This figure was created by Matthew Salganik (Princeton University). This figure is taken from Measuring the predictability of life outcomes with a scientific mass collaboration, published in PNAS on March 30, 2020.

Conclusion

Narrowly interpreted, this study forces social scientists researching life trajectories to reconcile the progress their field has made with these results. More than 750 studies have been published using data from the Fragile Families and Child Wellbeing Study, yet it seems nearly impossible to make accurate predictions from the same data.

More broadly interpreted, this study raises questions about applying statistical learning algorithms in other social domains. Note that in this study, a simple benchmark model with only a few predictors was only slightly worse than the best prediction and often outperformed many other submissions. So even when policy makers do turn to predictive models, they should ask whether complex, often black-box, models are worth their salt.

Finally, other longitudinal studies in the social sciences could copy this design. Before releasing a new wave of data, they could set up a mass collaboration to tackle research problems that are better solved collectively.

Read the full article: Measuring the predictability of life outcomes with a scientific mass collaboration, published in PNAS on March 30, 2020.

Academic profile: dr. Louis Raes